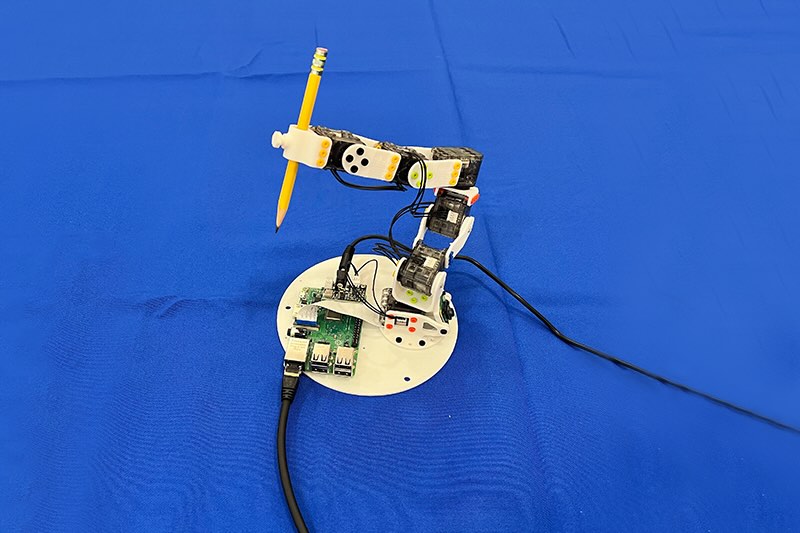

A 3D-printed robotic arm holds a pencil as it trains using random movements and a single camera, part of a new control system called Neural Jacobian Fields (NJF). Rather than relying on sensors or hand-coded models, NJF allows robots to learn how their bodies move in response to motor commands purely from visual observation, offering a pathway to more flexible, affordable, and self-aware robots. | Credit: MIT

In an office at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), a soft robotic hand carefully curls its fingers to grasp a small object. The intriguing part isn’t the mechanical design or embedded sensors; in fact, the hand contains none. Instead, the entire system relies on a single camera that watches the robot’s movements and uses that visual data to control it.

This capability comes from a new system CSAIL scientists developed, offering a different perspective on robotic control. Rather than using hand-designed models or complex sensor arrays, it allows robots to learn how their bodies respond to control commands, solely through vision. The approach, called Neural Jacobian Fields (NJF), gives robots a kind of bodily self-awareness. An open-access paper about the work was published in Nature on June 25.

“This work points to a shift from programming robots to teaching robots,” says Sizhe Lester Li, MIT PhD student in electrical engineering and computer science, CSAIL affiliate, and lead researcher on the work. “Today, many robotics tasks require extensive engineering and coding. In the future, we envision showing a robot what to do, and letting it learn how to achieve the goal autonomously.”

The motivation stems from a simple but powerful reframing: The main barrier to affordable, flexible robotics isn’t hardware; it’s control of capability, which could be achieved in multiple ways. Traditional robots are built to be rigid and sensor-rich, making it easier to construct a digital twin, a precise mathematical replica used for control. But when a robot is soft, deformable, or irregularly shaped, those assumptions fall apart. Rather than forcing robots to match our models, NJF flips the script, giving robots the ability to learn their own internal model from observation.

Look and learn

This decoupling of modeling and hardware design could significantly expand the design space for robotics. In soft and bio-inspired robots, designers often embed sensors or reinforce parts of the structure just to make modeling feasible. NJF lifts that constraint. The system doesn’t need onboard sensors or design tweaks to make control possible. Designers are freer to explore unconventional, unconstrained morphologies without worrying about whether they’ll be able to model or control them later.

“Think about how you learn to control your fingers: you wiggle, you observe, you adapt,” said Li. “That’s what our system does. It experiments with random actions and figures out which controls move which parts of the robot.”

The system has proven robust across a range of robot types. The team tested NJF on a pneumatic soft robotic hand capable of pinching and grasping, a rigid Allegro hand, a 3D-printed robotic arm, and even a rotating platform with no embedded sensors. In every case, the system learned both the robot’s shape and how it responded to control signals, just from vision and random motion.

The researchers see potential far beyond the lab. Robots equipped with NJF could one day perform agricultural tasks with centimeter-level localization accuracy, operate on construction sites without elaborate sensor arrays, or navigate dynamic environments where traditional methods break down.

At the core of NJF is a neural network that captures two intertwined aspects of a robot’s embodiment: its three-dimensional geometry and its sensitivity to control inputs. The system builds on neural radiance fields (NeRF), a technique that reconstructs 3D scenes from images by mapping spatial coordinates to color and density values. NJF extends this approach by learning not only the robot’s shape, but also a Jacobian field, a function that predicts how any point on the robot’s body moves in response to motor commands.
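
As a rough sketch of that idea, with notation that is assumed here for illustration and is not taken from the article: let $\mathbf{x}$ be a 3D point on the robot, $\mathbf{u} \in \mathbb{R}^{n}$ the vector of $n$ motor commands, and $\mathbf{J}(\mathbf{x})$ a $3 \times n$ matrix that the field assigns to that point. A small change in command $\delta\mathbf{u}$ is then predicted to move the point by approximately

$$
\delta\mathbf{x} \;\approx\; \mathbf{J}(\mathbf{x})\,\delta\mathbf{u}.
$$

Under this reading, column $k$ of $\mathbf{J}(\mathbf{x})$ describes how the point responds to motor $k$ alone, so a column close to zero would mean that motor barely moves that part of the body.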

To train the model, the robot performs random motions while multiple cameras record the outcomes. No human supervision or prior knowledge of the robot’s structure is required; the system simply infers the relationship between control signals and motion by watching.
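
The "random motions, then infer the relationship" idea can be illustrated with a deliberately tiny stand-in. The sketch below is not the NJF training procedure, which learns a neural field from video. It assumes a single tracked 2D point, two motors, and a linear response, and it fits a 2x2 matrix by ordinary least squares. All names (`collect_random_motions`, `fit_jacobian`, `J_TRUE`) and the noise level are hypothetical.

```python
import random


def apply_jacobian(J, du):
    """Return the 2D displacement J @ du for a 2x2 matrix J."""
    return [J[0][0] * du[0] + J[0][1] * du[1],
            J[1][0] * du[0] + J[1][1] * du[1]]


def collect_random_motions(J_true, n_samples, noise_std, rng):
    """Simulate random motor commands and the noisy point motion a camera might observe."""
    commands, displacements = [], []
    for _ in range(n_samples):
        du = [rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)]
        dx = [v + rng.gauss(0.0, noise_std) for v in apply_jacobian(J_true, du)]
        commands.append(du)
        displacements.append(dx)
    return commands, displacements


def fit_jacobian(commands, displacements):
    """Least-squares estimate of J such that displacement ~= J @ command."""
    # Normal equations: J = (sum of dx du^T) @ inverse(sum of du du^T).
    A = [[0.0, 0.0], [0.0, 0.0]]
    B = [[0.0, 0.0], [0.0, 0.0]]
    for du, dx in zip(commands, displacements):
        for i in range(2):
            for j in range(2):
                A[i][j] += dx[i] * du[j]
                B[i][j] += du[i] * du[j]
    det = B[0][0] * B[1][1] - B[0][1] * B[1][0]
    B_inv = [[B[1][1] / det, -B[0][1] / det],
             [-B[1][0] / det, B[0][0] / det]]
    return [[A[i][0] * B_inv[0][j] + A[i][1] * B_inv[1][j] for j in range(2)]
            for i in range(2)]


# Hypothetical ground truth; the fitting step never reads it directly.
J_TRUE = [[0.5, 0.0], [0.1, -0.3]]
rng = random.Random(0)
commands, displacements = collect_random_motions(J_TRUE, 200, 0.01, rng)
J_est = fit_jacobian(commands, displacements)
print(J_est)
```

The fit uses only pairs of commands and observed motion, which mirrors the point of the paragraph above: no model of the mechanism is supplied, and the mapping is recovered from watching what random commands do.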

Once training is complete, the robot only needs a single monocular camera for real-time closed-loop control, running at about 12 Hertz. This allows it to continuously observe itself, plan, and act responsively. That speed makes NJF more viable than many physics-based simulators for soft robots, which are often too computationally intensive for real-time use.
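
A closed loop of this kind can be sketched in a few lines. The example below is a simplified illustration and not the authors' controller. It assumes a single tracked 2D point, a 2x2 learned Jacobian (`J_MODEL`), and a simulated robot whose true response (`J_TRUE`) differs slightly from the model. Each cycle it measures the error, solves for a command that should remove a fraction of it, and applies that command. The names, the gain, and the matrices are hypothetical; only the roughly 12 Hz rate comes from the article.

```python
CONTROL_RATE_HZ = 12.0                     # approximate rate reported in the article
CONTROL_PERIOD_S = 1.0 / CONTROL_RATE_HZ   # time budget per observe-plan-act cycle


def solve_2x2(J, b):
    """Solve J @ x = b for a 2x2 matrix J using Cramer's rule."""
    det = J[0][0] * J[1][1] - J[0][1] * J[1][0]
    return [(J[1][1] * b[0] - J[0][1] * b[1]) / det,
            (J[0][0] * b[1] - J[1][0] * b[0]) / det]


def control_step(J_model, position, target, gain=0.5):
    """Pick a command that the model predicts will close `gain` of the remaining error."""
    desired_motion = [gain * (target[0] - position[0]),
                      gain * (target[1] - position[1])]
    return solve_2x2(J_model, desired_motion)


def simulate(J_model, J_true, start, target, steps=40):
    """Run the loop against a simulated robot; a real loop would read the camera instead."""
    position = list(start)
    for _ in range(steps):
        du = control_step(J_model, position, target)
        # The simulated robot responds with its true Jacobian, not the model's.
        position[0] += J_true[0][0] * du[0] + J_true[0][1] * du[1]
        position[1] += J_true[1][0] * du[0] + J_true[1][1] * du[1]
    return position


J_MODEL = [[0.5, 0.0], [0.1, -0.3]]      # hypothetical learned model
J_TRUE = [[0.55, 0.02], [0.08, -0.33]]   # hypothetical true response, slightly different
final = simulate(J_MODEL, J_TRUE, start=[0.0, 0.0], target=[1.0, -0.5])
print(final, "after", 40 * CONTROL_PERIOD_S, "simulated seconds")
```

Because the error is measured again on every cycle, the loop still settles on the target even though the model is slightly wrong, which is the practical benefit of running closed-loop instead of executing a precomputed plan.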

In early simulations, even simple 2D fingers and sliders were able to learn this mapping using just a few examples. By modeling how specific points deform or shift in response to action, NJF builds a dense map of controllability. That internal model allows it to generalize motion across the robot’s body, even when the data are noisy or incomplete.

“What’s really interesting is that the system figures out on its own which motors control which parts of the robot,” said Li. “This isn’t programmed; it emerges naturally through learning, much like a person discovering the buttons on a new device.”

The future is soft

For decades, robotics has favored rigid, easily modeled machines, like the industrial arms found in factories, because their properties simplify control. But the field has been moving toward soft, bio-inspired robots that can adapt to the real world more fluidly. The trade-off? These robots are harder to model.

“Robotics today often feels out of reach because of costly sensors and complex programming. Our goal with Neural Jacobian Fields is to lower the barrier, making robotics affordable, adaptable, and accessible to more people. Vision is a resilient, reliable sensor,” said senior author and MIT assistant professor Vincent Sitzmann, who leads the Scene Representation group. “It opens the door to robots that can operate in messy, unstructured environments, from farms to construction sites, without expensive infrastructure.”

“Vision alone can provide the cues needed for localization and control, eliminating the need for GPS, external tracking systems, or complex onboard sensors. This opens the door to robust, adaptive behavior in unstructured environments, from drones navigating indoors or underground without maps to mobile manipulators working in cluttered homes or warehouses, and even legged robots traversing uneven terrain,” said co-author Daniela Rus, MIT professor of electrical engineering and computer science and director of CSAIL. “By learning from visual feedback, these systems develop internal models of their own motion and dynamics, enabling flexible, self-supervised operation where traditional localization methods would fail.”

While training NJF currently requires multiple cameras and must be redone for each robot, the researchers are already imagining a more accessible version. In the future, hobbyists could record a robot’s random movements with their phone, much like you’d take a video of a rental car before driving off, and use that footage to create a control model, with no prior knowledge or special equipment required.

The system doesn’t yet generalize across different robots, and it lacks force or tactile sensing, limiting its effectiveness on contact-rich tasks. But the team is exploring new ways to address these limitations: improving generalization, handling occlusions, and extending the model’s ability to reason over longer spatial and temporal horizons.

“Just as humans develop an intuitive understanding of how their bodies move and respond to commands, NJF gives robots that kind of embodied self-awareness through vision alone,” said Li. “This understanding is a foundation for flexible manipulation and control in real-world environments. Our work, essentially, reflects a broader trend in robotics: moving away from manually programming detailed models toward teaching robots through observation and interaction.”

Editor’s Note: This article was republished from MIT News.