Amazon SageMaker Lakehouse is a unified, open, and secure data lakehouse that now seamlessly integrates with Amazon S3 Tables, the first cloud object store with built-in Apache Iceberg support. With this integration, SageMaker Lakehouse provides unified access to S3 Tables, general purpose Amazon S3 buckets, Amazon Redshift data warehouses, and data sources such as Amazon DynamoDB or PostgreSQL. You can then query, analyze, and join the data using Redshift, Amazon Athena, Amazon EMR, and AWS Glue. In addition to your familiar AWS services, you can access and query your data in place with your choice of Iceberg-compatible tools and engines, giving you the flexibility to use SQL or Spark-based tools and collaborate on this data the way you like. You can secure and centrally manage your data in the lakehouse by defining fine-grained permissions with AWS Lake Formation that are consistently applied across all analytics and machine learning (ML) tools and engines.

Organizations are becoming increasingly data driven, and as data becomes a differentiator in business, organizations need faster access to all their data, wherever it lives, using their preferred engines to support rapidly expanding analytics and AI/ML use cases. Take the example of a retail company that started by storing its customer sales and churn data in a data warehouse for business intelligence reports. With massive business growth, the company needs to manage a variety of data sources as well as exponential growth in data volume. It builds a data lake using Apache Iceberg to store new data such as customer reviews and social media interactions.

This enables the company to serve its end customers with new personalized marketing campaigns and understand their impact on sales and churn. However, data distributed across data lakes and warehouses limits its ability to move quickly: it would have to set up specialized connectors, manage multiple access policies, and often resort to copying data, which increases the cost of both managing separate datasets and storing redundant data. SageMaker Lakehouse addresses these challenges by providing secure, centralized management of data in data lakes, data warehouses, and data sources such as MySQL and SQL Server, through fine-grained permissions that are consistently applied across data in all analytics engines.

In this post, we show you how to use various analytics services through the integration of SageMaker Lakehouse with S3 Tables. We begin by enabling the integration of S3 Tables with AWS analytics services. We create S3 Tables and Redshift tables and populate them with data. We then set up Amazon SageMaker Unified Studio by creating a company-specific domain, a new project with users, and fine-grained permissions. This lets us unify data lakes and data warehouses and use them with analytics services such as Athena, Redshift, AWS Glue, and Amazon EMR.

Solution overview

To illustrate the solution, we consider a fictional company called Example Retail Corp. Example Retail’s leadership is interested in understanding customer and business insights across thousands of customer touchpoints for millions of customers, to help them build sales, marketing, and investment plans. Leadership wants to analyze all their data to identify at-risk customers, understand the impact of personalized marketing campaigns on customer churn, and develop targeted retention and sales strategies.

Alice is a data administrator at Example Retail Corp who has embarked on an initiative to consolidate customer information from multiple touchpoints, including social media, sales, and support requests. She decides to use S3 Tables with Iceberg transactional capability to achieve scalability as updates are streamed across billions of customer interactions, while providing the same durability, availability, and performance characteristics that S3 is known for. Alice has already built a large warehouse with Redshift, which contains historical and current data about sales, customer prospects, and churn.

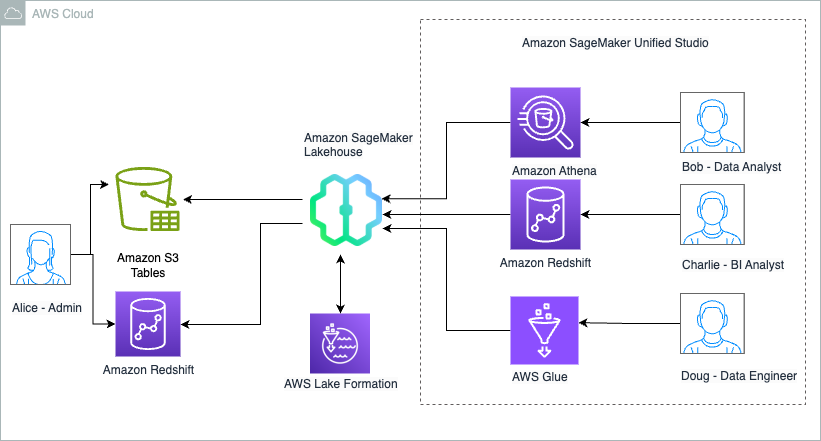

Alice supports an extended team of developers, engineers, and data scientists who require access to the data environment to develop business insights, dashboards, ML models, and knowledge bases. This team includes:

Bob, a data analyst who needs access to S3 Tables and warehouse data to automate daily reports for leadership on customer interaction growth and churn across customer touchpoints.

Charlie, a business intelligence (BI) analyst who is tasked with building interactive dashboards for the funnel of customer prospects and their conversions across multiple touchpoints, and making these available to thousands of sales team members.

Doug, a data engineer responsible for building ML forecasting models for sales growth using the pipeline and/or customer conversion across multiple touchpoints, and making these available to finance and planning teams.

Alice decides to use SageMaker Lakehouse to unify data across S3 Tables and the Redshift data warehouse. Bob is excited about this decision because he can now build daily reports using his expertise with Athena. Charlie knows that he can quickly build Amazon QuickSight dashboards with queries optimized by Redshift’s cost-based optimizer. Doug, an open source Apache Spark contributor, is excited that he can build Spark-based processing with AWS Glue or Amazon EMR to create ML forecasting models.

The following diagram illustrates the solution architecture.

Implementing this solution consists of the following high-level steps. For Example Retail, Alice, as the data administrator, performs these steps:

- Create a table bucket. S3 Tables stores Apache Iceberg tables as S3 resources, and customer details are managed in S3 Tables. You then enable integration with AWS analytics services, which automatically sets up the SageMaker Lakehouse integration so that the table bucket appears as a child catalog under the federated s3tablescatalog in the AWS Glue Data Catalog and is registered with AWS Lake Formation for access control. Next, you create a table namespace (or database), which is a logical construct for grouping tables, and create a table using an Athena SQL CREATE TABLE statement.

- Publish your data warehouse to the Glue Data Catalog. Churn data is managed in a Redshift data warehouse, which is published to the Data Catalog as a federated catalog and is available in SageMaker Lakehouse.

- Create a SageMaker Unified Studio project. SageMaker Unified Studio integrates with SageMaker Lakehouse and simplifies analytics and AI with a unified experience. Start by creating a domain and adding all users (Bob, Charlie, and Doug). Then create a project in the domain, choosing a project profile that provisions various resources and the project AWS Identity and Access Management (IAM) role that manages resource access. Alice adds Bob, Charlie, and Doug to the project as members.

- Onboard S3 Tables and Redshift tables to SageMaker Unified Studio. To onboard S3 Tables to the project, you grant permission on the resource to the SageMaker Unified Studio project role in Lake Formation. This makes the catalog discoverable in the lakehouse data explorer so that users (Bob, Charlie, and Doug) can start querying tables. SageMaker Lakehouse resources can then be accessed from compute engines such as Athena, Redshift, and Apache Spark-based engines such as AWS Glue to derive churn analysis insights, with Lake Formation managing the data permissions.
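Each lakehouse table in this walkthrough is reached through a three-level path: a catalog (which may itself be nested, such as s3tablescatalog/blog-customer-bucket or churn_lakehouse/dev), a database or namespace, and a table. The minimal Python sketch below composes those paths into double-quoted SQL identifiers of the kind Athena uses. The helper names quote_ident and qualified_name are hypothetical, and treating the whole slash-separated catalog path as one quoted identifier is an assumption to check against the Athena documentation.

```python
def quote_ident(part: str) -> str:
    """Wrap one SQL identifier in double quotes, doubling any embedded quotes."""
    return '"' + part.replace('"', '""') + '"'


def qualified_name(catalog: str, database: str, table: str) -> str:
    """Return "catalog"."database"."table" for a (possibly nested) catalog.

    A nested catalog such as s3tablescatalog/blog-customer-bucket stays inside
    a single pair of quotes, so the slash is part of the identifier.
    """
    return ".".join(quote_ident(p) for p in (catalog, database, table))


# Catalog, database, and table names used in this walkthrough.
S3_CUSTOMER = qualified_name(
    "s3tablescatalog/blog-customer-bucket", "customernamespace", "customer")
REDSHIFT_CHURN = qualified_name("churn_lakehouse/dev", "public", "customer_churn")

if __name__ == "__main__":
    print(S3_CUSTOMER)     # "s3tablescatalog/blog-customer-bucket"."customernamespace"."customer"
    print(REDSHIFT_CHURN)  # "churn_lakehouse/dev"."public"."customer_churn"
```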

Prerequisites

To follow the steps in this post, you must complete the following prerequisites:


- An AWS account with access to the following AWS services:

  - Amazon S3, including S3 Tables

  - Amazon Redshift

  - AWS Identity and Access Management (IAM)

  - Amazon SageMaker Unified Studio

  - AWS Lake Formation and AWS Glue Data Catalog

  - AWS Glue

- Create a user with administrative access.

- Have access to an IAM role that is a Lake Formation data lake administrator. For instructions, refer to Create a data lake administrator.

- Enable AWS IAM Identity Center in the same AWS Region where you want to create your SageMaker Unified Studio domain. Set up your identity provider (IdP) and synchronize identities and groups with AWS IAM Identity Center. For more information, refer to IAM Identity Center Identity source tutorials.

- Create a read-only administrator role to discover the Amazon Redshift federated catalogs in the Data Catalog. For instructions, refer to Prerequisites for managing Amazon Redshift namespaces in the AWS Glue Data Catalog.

- Create an IAM role named DataTransferRole. For instructions, refer to Prerequisites for managing Amazon Redshift namespaces in the AWS Glue Data Catalog.

- Create an Amazon Redshift Serverless namespace called churnwg. For more information, see Get started with Amazon Redshift Serverless data warehouses.

Create a table bucket and enable integration with analytics services

Alice completes the following steps to create the S3 table bucket for the new data she plans to add or import into S3 Tables.

Follow these steps to create a table bucket and enable integration with SageMaker Lakehouse:

- Sign in to the S3 console as the user created in prerequisite step 2.

- Choose Table buckets in the navigation pane and choose Enable integration.

- Choose Table buckets in the navigation pane and choose Create table bucket.

- For Table bucket name, enter a name such as blog-customer-bucket.

- Choose Create table bucket.

- Choose Create table with Athena.

- Select Create a namespace and provide a namespace (for example, customernamespace).

- Choose Create namespace.

- Choose Create table with Athena.

- On the Athena console, run the following SQL script to create a table:

This script only adds a few example rows to the table; for production use cases, customers typically use engines such as Spark to add data to the table.
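As a hedged sketch of such a script, the following Python code submits a CREATE TABLE and INSERT pair through the boto3 Athena client. The three-column schema (customer_id, first_name, signup_channel) and the rows are hypothetical. Athena accepts one statement per start_query_execution call, so the code polls get_query_execution until each query reaches a terminal state before submitting the next. The primary workgroup (which must have a query result location configured) and passing s3tablescatalog/blog-customer-bucket as the Catalog in QueryExecutionContext are assumptions.

```python
import time

# Hypothetical schema and rows, for illustration only.
STATEMENTS = [
    """CREATE TABLE customer (
        customer_id int,
        first_name string,
        signup_channel string)
    TBLPROPERTIES ('table_type' = 'iceberg')""",
    """INSERT INTO customer VALUES
        (1, 'Ana', 'social'),
        (2, 'Ben', 'web'),
        (3, 'Chen', 'store')""",
]

TERMINAL_STATES = {"SUCCEEDED", "FAILED", "CANCELLED"}


def run_statements(athena, statements, workgroup="primary",
                   catalog="s3tablescatalog/blog-customer-bucket",
                   database="customernamespace", poll_seconds=1.0):
    """Run statements one at a time and return their query execution IDs.

    `athena` is expected to be boto3.client("athena"). Each query is polled
    until it finishes, so the INSERT never starts before the CREATE succeeds.
    """
    ids = []
    for sql in statements:
        qid = athena.start_query_execution(
            QueryString=sql,
            QueryExecutionContext={"Catalog": catalog, "Database": database},
            WorkGroup=workgroup,
        )["QueryExecutionId"]
        while True:
            status = athena.get_query_execution(
                QueryExecutionId=qid)["QueryExecution"]["Status"]
            if status["State"] in TERMINAL_STATES:
                break
            time.sleep(poll_seconds)
        if status["State"] != "SUCCEEDED":
            reason = status.get("StateChangeReason", "")
            raise RuntimeError(f"{qid} ended in {status['State']}: {reason}")
        ids.append(qid)
    return ids
```

With AWS credentials configured, run_statements(boto3.client("athena"), STATEMENTS) would submit both statements in order and stop at the first failure.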

The S3 Tables table customer is now created, populated with data, and integrated with SageMaker Lakehouse.
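The console steps for the bucket and namespace also have API equivalents. Below is a minimal sketch using the boto3 S3 Tables client (create_table_bucket and create_namespace). It assumes the integration with AWS analytics services was already enabled on the console, which this sketch does not do. The function name create_bucket_and_namespace is hypothetical.

```python
def create_bucket_and_namespace(s3tables, bucket_name="blog-customer-bucket",
                                namespace="customernamespace"):
    """Create a table bucket plus one namespace and return the bucket ARN.

    `s3tables` is expected to be boto3.client("s3tables"). Enabling the
    analytics integration is assumed to have been done on the console.
    """
    # CreateTableBucket returns the new bucket's ARN under the "arn" key.
    bucket_arn = s3tables.create_table_bucket(name=bucket_name)["arn"]
    # CreateNamespace takes the namespace as a single-element list.
    s3tables.create_namespace(tableBucketARN=bucket_arn, namespace=[namespace])
    return bucket_arn
```

Creating the table itself is still done with SQL, as in the Athena step above.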

Set up Redshift tables and publish to the Data Catalog

Alice completes the following steps to publish the data in Redshift to the Data Catalog. We also demonstrate how the Redshift table is created and populated, although in Alice’s case the Redshift table already exists with all the historical data on sales revenue.

- Sign up to the Redshift endpoint

churnwgas an admin consumer. - Run the next script to create a desk beneath the

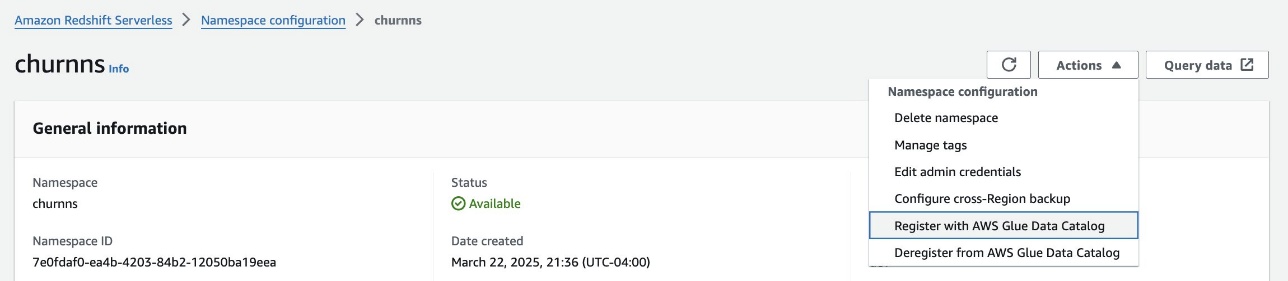

devdatabase beneath the general public schema: - On the Redshift Serverless console, navigate to the namespace.

- On the Action dropdown menu, choose Register with AWS Glue Data Catalog to integrate with SageMaker Lakehouse.

- Choose Register.

- Sign in to the Lake Formation console as the data lake administrator.

- Under Data Catalog in the navigation pane, choose Catalogs and Pending catalog invitations.

- Select the pending invitation and choose Approve and create catalog.

- Provide a name for the catalog (for example, churn_lakehouse).

- Under Access from engines, select Access this catalog from Iceberg-compatible engines and choose DataTransferRole for the IAM role.

- Choose Next.

- Choose Add permissions.

- Under Principals, choose the datalakeadmin role for IAM users and roles, choose Super user for Catalog permissions, and choose Add.

- Choose Create catalog.

The Redshift table customer_churn is now created, populated with data, and integrated with SageMaker Lakehouse.
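For readers who prefer to script the table-creation step, here is a hedged sketch using the boto3 Redshift Data API client (batch_execute_statement and describe_statement). The customer_id, internet_service, and is_churned columns are the ones named later in this post; monthly_charges and all rows are hypothetical. Using churnwg as the Serverless workgroup name is an assumption based on the endpoint name in the prerequisites.

```python
import time

# Hypothetical schema and rows; monthly_charges is illustrative only.
REDSHIFT_SQLS = [
    """CREATE TABLE public.customer_churn (
        customer_id BIGINT,
        internet_service VARCHAR(32),
        monthly_charges DECIMAL(10, 2),
        is_churned BOOLEAN)""",
    """INSERT INTO public.customer_churn VALUES
        (1, 'Fiber optic', 95.00, true),
        (2, 'DSL', 45.50, false),
        (3, 'Fiber optic', 89.90, false)""",
]

FAILED_STATES = {"FAILED", "ABORTED"}


def create_churn_table(redshift_data, workgroup="churnwg", database="dev",
                       poll_seconds=1.0):
    """Run REDSHIFT_SQLS as one batch on Redshift Serverless and wait for it.

    `redshift_data` is expected to be boto3.client("redshift-data").
    """
    stmt_id = redshift_data.batch_execute_statement(
        WorkgroupName=workgroup, Database=database, Sqls=REDSHIFT_SQLS
    )["Id"]
    while True:
        desc = redshift_data.describe_statement(Id=stmt_id)
        if desc["Status"] == "FINISHED":
            return stmt_id
        if desc["Status"] in FAILED_STATES:
            raise RuntimeError(f"{stmt_id} {desc['Status']}: {desc.get('Error', '')}")
        time.sleep(poll_seconds)
```

Running both statements in one batch keeps them in a single call, so the INSERT cannot run against a table that does not exist yet.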

Create a SageMaker Unified Studio domain and project

Alice now sets up the SageMaker Unified Studio domain and project so that she can bring users (Bob, Charlie, and Doug) together in the new project.

Complete the following steps to create a SageMaker domain and project using SageMaker Unified Studio:

- On the SageMaker Unified Studio console, create a SageMaker Unified Studio domain and project using the All Capabilities profile template. For more details, refer to Setting up Amazon SageMaker Unified Studio. For this post, we create a project named churn_analysis.

- Set up AWS IAM Identity Center with users Bob, Charlie, and Doug, and add them to the domain and project.

- From SageMaker Unified Studio, navigate to the project overview and, on the Project details tab, note the project role Amazon Resource Name (ARN).

- Sign in to the IAM console as an admin user.

- In the navigation pane, choose Roles.

- Search for the project role, choose Add permissions, and attach the AmazonS3TablesReadOnlyAccess policy.
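The IAM step above can also be scripted. A minimal sketch follows: it extracts the role name from the project role ARN noted earlier and calls the boto3 IAM client’s attach_role_policy. The policy ARN arn:aws:iam::aws:policy/AmazonS3TablesReadOnlyAccess follows the usual pattern for AWS managed policies and is an assumption to confirm in the IAM console; the helper names are hypothetical.

```python
# Assumed ARN of the AWS managed policy named in the step above.
AMAZON_S3_TABLES_READ_ONLY = "arn:aws:iam::aws:policy/AmazonS3TablesReadOnlyAccess"


def role_name_from_arn(role_arn: str) -> str:
    """Return RoleName from arn:aws:iam::<account>:role/<optional path/>RoleName."""
    parts = role_arn.split(":", 5)
    if len(parts) != 6 or not parts[5].startswith("role/"):
        raise ValueError(f"not an IAM role ARN: {role_arn}")
    # The role name is the last path segment; any path before it is dropped.
    return parts[5].rsplit("/", 1)[1]


def attach_s3_tables_read_only(iam, project_role_arn: str) -> str:
    """Attach the read-only S3 Tables policy with boto3.client("iam")."""
    role_name = role_name_from_arn(project_role_arn)
    iam.attach_role_policy(RoleName=role_name, PolicyArn=AMAZON_S3_TABLES_READ_ONLY)
    return role_name
```

IAM APIs identify roles by name rather than ARN, which is why the ARN from the Project details tab is reduced to its last path segment first.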

SageMaker Unified Studio is now set up with a domain, project, and users.

Onboard S3 Tables and Redshift tables to the SageMaker Unified Studio project

Alice now configures the SageMaker Unified Studio project role for fine-grained access control to determine which datasets each member of her team can access.

Grant the project role full table access on the customer dataset. To do so, complete the following steps:

- Sign in to the Lake Formation console as the data lake administrator.

- In the navigation pane, choose Data lake permissions, then choose Grant.

- In the Principals section, for IAM users and roles, choose the project role ARN noted earlier.

- In the LF-Tags or catalog resources section, select Named Data Catalog resources:

  - Choose :s3tablescatalog/blog-customer-bucket for Catalogs.

  - Choose customernamespace for Databases.

  - Choose customer for Tables.

- In the Table permissions section, select Select and Describe for permissions.

- Choose Grant.
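The same grant can be expressed with the boto3 Lake Formation client’s grant_permissions call, using a Table resource. In the sketch below, the CatalogId format "<account-id>:s3tablescatalog/blog-customer-bucket" for a nested catalog is an assumption inferred from the catalog name shown on the console; the account ID and role ARN in any usage are placeholders, and the function names are hypothetical.

```python
def table_grant_request(principal_arn, account_id,
                        catalog="s3tablescatalog/blog-customer-bucket",
                        database="customernamespace", table="customer"):
    """Build grant_permissions kwargs for SELECT and DESCRIBE on one table."""
    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": {
            "Table": {
                # Assumed nested-catalog ID format: "<account-id>:<catalog path>".
                "CatalogId": f"{account_id}:{catalog}",
                "DatabaseName": database,
                "Name": table,
            }
        },
        "Permissions": ["SELECT", "DESCRIBE"],
    }


def grant_table_access(lakeformation, principal_arn, account_id):
    """Apply the grant with boto3.client("lakeformation") and return the request."""
    request = table_grant_request(principal_arn, account_id)
    lakeformation.grant_permissions(**request)
    return request
```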

Now grant the project role access to a subset of columns from the customer_churn dataset.

- In the navigation pane, choose Data lake permissions, then choose Grant.

- In the Principals section, for IAM users and roles, choose the project role ARN noted earlier.

- In the LF-Tags or catalog resources section, select Named Data Catalog resources:

  - Choose :churn_lakehouse/dev for Catalogs.

  - Choose public for Databases.

  - Choose customer_churn for Tables.

- In the Table permissions section, select Select.

- In the Data permissions section, select Column-based access.

- For Choose permission filter, select Include columns and choose customer_id, internet_service, and is_churned.

- Choose Grant.
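Column-based access corresponds to a TableWithColumns resource with an explicit ColumnNames list in Lake Formation’s grant_permissions. This minimal sketch builds that request for the three columns chosen above. As with the table grant, the "<account-id>:churn_lakehouse/dev" CatalogId format is an assumption, and the function names are hypothetical.

```python
# The three columns included in the permission filter above.
GRANTED_COLUMNS = ["customer_id", "internet_service", "is_churned"]


def column_grant_request(principal_arn, account_id, catalog="churn_lakehouse/dev",
                         database="public", table="customer_churn",
                         columns=GRANTED_COLUMNS):
    """Build grant_permissions kwargs for SELECT on selected columns only."""
    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": {
            "TableWithColumns": {
                # Assumed nested-catalog ID format: "<account-id>:<catalog path>".
                "CatalogId": f"{account_id}:{catalog}",
                "DatabaseName": database,
                "Name": table,
                "ColumnNames": list(columns),
            }
        },
        "Permissions": ["SELECT"],
    }


def grant_column_access(lakeformation, principal_arn, account_id):
    """Apply the grant with boto3.client("lakeformation") and return the request."""
    request = column_grant_request(principal_arn, account_id)
    lakeformation.grant_permissions(**request)
    return request
```

Any column not listed, for example a revenue or charges column, stays invisible to the project role in every engine that honors Lake Formation permissions.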

All users in the churn_analysis project in SageMaker Unified Studio are now set up. They have access to all columns in the customer table, and fine-grained permissions on the Redshift table limit them to only three columns.

Verify data access in SageMaker Unified Studio

Alice can now do a final check that all the data is accessible, to make sure each of her team members is set up to access the datasets.

Now you can verify data access for different users in SageMaker Unified Studio.

- Sign in to SageMaker Unified Studio as Bob and choose the churn_analysis project.

- Navigate to the Data explorer to view s3tablescatalog and churn_lakehouse under Lakehouse.

Data analyst uses Athena to analyze customer churn

Bob, the data analyst, can now log in to SageMaker Unified Studio, choose the churn_analysis project, navigate to the Build options, and choose Query Editor under Data Analysis & Integration.

Bob chooses Athena (Lakehouse) as the connection, s3tablescatalog/blog-customer-bucket as the catalog, and customernamespace as the database, and runs the following SQL to analyze the data for customer churn:
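A sketch of the kind of cross-catalog join Bob could run follows, kept as a Python string alongside a helper that flattens the Athena get_query_results response. The signup_channel column on the customer table is hypothetical; customer_id and is_churned are the Redshift columns granted to the project role, and is_churned is assumed to be a boolean. The helper name results_to_dicts is also hypothetical.

```python
# Hypothetical query joining the S3 Tables customer table to the Redshift
# customer_churn table through their lakehouse catalogs.
CHURN_BY_CHANNEL_SQL = """
SELECT c.signup_channel,
       COUNT(*) AS customers,
       SUM(CASE WHEN ch.is_churned THEN 1 ELSE 0 END) AS churned
FROM "s3tablescatalog/blog-customer-bucket"."customernamespace"."customer" c
JOIN "churn_lakehouse/dev"."public"."customer_churn" ch
  ON c.customer_id = ch.customer_id
GROUP BY c.signup_channel
ORDER BY churned DESC
"""


def results_to_dicts(response):
    """Turn one get_query_results page into a list of {column: value} dicts.

    Athena returns values as strings under "VarCharValue" (absent for NULL),
    and for SELECT queries the first row of the first page is the header.
    """
    rows = response["ResultSet"]["Rows"]
    if not rows:
        return []
    header = [cell.get("VarCharValue") for cell in rows[0]["Data"]]
    return [
        dict(zip(header, (cell.get("VarCharValue") for cell in row["Data"])))
        for row in rows[1:]
    ]
```

To run it outside the query editor, pass CHURN_BY_CHANNEL_SQL as QueryString to the Athena client’s start_query_execution, wait for the query to succeed, and feed the first get_query_results page to results_to_dicts (later pages have no header row).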

Bob can now join data across S3 Tables and Redshift in Athena, and can build out full SQL analytics to automate the daily customer growth and churn reports for leadership.

BI analyst uses the Redshift engine to analyze customer data

Charlie, the BI analyst, can now log in to SageMaker Unified Studio and choose the churn_analysis project. He navigates to the Build options and chooses Query Editor under Data Analysis & Integration. He chooses Redshift (Lakehouse) as the connection, dev for Databases, and public for Schemas.

He then runs the following SQL to perform his analysis:
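As an illustration of the kind of aggregation Charlie might run, the sketch below computes churn by internet service using only the three columns granted on customer_churn. The SQL is written to be plain enough for both Redshift and SQLite, and it is exercised here against Python’s built-in sqlite3 module with hypothetical rows, so the logic can be checked without a warehouse. In the Redshift query editor, the same SELECT would run against the customer_churn table in the public schema.

```python
import sqlite3

# Hypothetical rows limited to the three columns granted on customer_churn.
SAMPLE_ROWS = [
    (1, "Fiber optic", 1),
    (2, "DSL", 0),
    (3, "Fiber optic", 0),
    (4, "DSL", 0),
    (5, "Fiber optic", 1),
]

# Plain SQL intended to be portable between Redshift and SQLite.
CHURN_BY_SERVICE_SQL = """
SELECT internet_service,
       COUNT(*) AS customers,
       SUM(CASE WHEN is_churned THEN 1 ELSE 0 END) AS churned,
       ROUND(100.0 * SUM(CASE WHEN is_churned THEN 1 ELSE 0 END) / COUNT(*), 1) AS churn_pct
FROM customer_churn
GROUP BY internet_service
ORDER BY churn_pct DESC
"""


def churn_by_service(rows):
    """Load rows into an in-memory SQLite table and run the aggregation."""
    con = sqlite3.connect(":memory:")
    try:
        # SQLite has no BOOLEAN type, so is_churned is stored as 0/1.
        con.execute("CREATE TABLE customer_churn ("
                    "customer_id INTEGER, internet_service TEXT, is_churned INTEGER)")
        con.executemany("INSERT INTO customer_churn VALUES (?, ?, ?)", rows)
        return con.execute(CHURN_BY_SERVICE_SQL).fetchall()
    finally:
        con.close()


if __name__ == "__main__":
    for row in churn_by_service(SAMPLE_ROWS):
        print(row)
```

With the sample rows, Fiber optic shows 3 customers with 2 churned (66.7 percent) and DSL shows 2 customers with none churned.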

Charlie can now refine the SQL query and use it to power QuickSight dashboards that can be shared with sales team members.


Data engineer uses the AWS Glue Spark engine to process customer data

Finally, Doug logs in to SageMaker Unified Studio and chooses the churn_analysis project to perform his analysis. He navigates to the Build options and chooses JupyterLab under IDE & Applications. He downloads the churn_analysis.ipynb notebook and uploads it to the explorer. He then runs the cells, selecting project.spark.compatibility as the compute.

He runs the following SQL to analyze the data for customer churn:
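A hedged sketch of how Doug might pull a labeled training set with Spark SQL follows. It assumes the notebook’s SparkSession resolves the lakehouse catalogs under the same names shown in Data explorer, quoted with backticks so the slash stays inside one identifier; that naming, the signup_channel column, and the function names are all assumptions rather than the notebook’s actual code.

```python
def backtick(part: str) -> str:
    """Quote one Spark SQL identifier, doubling any embedded backticks."""
    return "`" + part.replace("`", "``") + "`"


def spark_table(catalog: str, database: str, table: str) -> str:
    """Return `catalog`.`database`.`table`; the slash stays inside one identifier."""
    return ".".join(backtick(p) for p in (catalog, database, table))


# Assumed catalog names; signup_channel is hypothetical, and the churn columns
# are the three granted to the project role.
TRAINING_SQL = f"""
SELECT c.customer_id,
       c.signup_channel,
       ch.internet_service,
       CAST(ch.is_churned AS INT) AS label
FROM {spark_table("s3tablescatalog/blog-customer-bucket", "customernamespace", "customer")} c
JOIN {spark_table("churn_lakehouse/dev", "public", "customer_churn")} ch
  ON c.customer_id = ch.customer_id
"""


def load_training_frame(spark):
    """Run the join in an existing SparkSession and return the DataFrame."""
    return spark.sql(TRAINING_SQL)
```

Casting is_churned to an integer label gives a 0/1 target column that Spark ML classifiers can consume directly.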

Doug can now use Spark SQL to process data from both S3 Tables and Redshift tables and start building forecasting models for customer growth and churn.

Cleaning up

If you implemented the example and want to remove the resources, complete the following steps:

- Clean up S3 Tables resources:

- Clean up the Redshift data resources:

- On the Lake Formation console, choose Catalogs in the navigation pane.

- Delete the churn_lakehouse catalog.

- Delete the SageMaker project, IAM roles, AWS Glue resources, Athena workgroup, and S3 buckets created for the domain.

- Delete the SageMaker domain and VPC created for the setup.
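S3 Tables resources have to be removed from the inside out: tables first, then the namespace, then the table bucket. A minimal sketch with the boto3 S3 Tables client (delete_table, delete_namespace, delete_table_bucket) follows; it assumes you know the bucket ARN and that customer is the only table in the namespace. The function name is hypothetical.

```python
def delete_s3_tables_resources(s3tables, bucket_arn, namespace="customernamespace",
                               tables=("customer",)):
    """Delete tables, then the namespace, then the bucket.

    `s3tables` is expected to be boto3.client("s3tables"). A namespace or
    bucket can only be deleted once it is empty, hence the ordering.
    """
    for name in tables:
        s3tables.delete_table(tableBucketARN=bucket_arn, namespace=namespace, name=name)
    s3tables.delete_namespace(tableBucketARN=bucket_arn, namespace=namespace)
    s3tables.delete_table_bucket(tableBucketARN=bucket_arn)
```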

Conclusion

In this post, we showed how you can use SageMaker Lakehouse to unify data across S3 Tables and Redshift data warehouses, which can help you build powerful analytics and AI/ML applications on a single copy of data. SageMaker Lakehouse gives you the flexibility to access and query your data in place with Iceberg-compatible tools and engines. You can secure your data in the lakehouse by defining fine-grained permissions that are enforced across analytics and ML tools and engines.

For more information, refer to Tutorial: Getting started with S3 Tables, S3 Tables integration, and Connecting to the Data Catalog using AWS Glue Iceberg REST endpoint. We encourage you to try the S3 Tables integration with SageMaker Lakehouse and share your feedback with us.

About the authors

Sandeep Adwankar is a Senior Technical Product Manager at AWS. Based in the California Bay Area, he works with customers around the globe to translate business and technical requirements into products that enable customers to improve how they manage, secure, and access data.


Srividya Parthasarathy is a Senior Big Data Architect on the AWS Lake Formation team. She works with the product team and customers to build robust features and solutions for their analytical data platform. She enjoys building data mesh solutions and sharing them with the community.


Aditya Kalyanakrishnan is a Senior Product Manager on the Amazon S3 team at AWS. He enjoys learning from customers about how they use Amazon S3 and helping them scale performance. Adi is based in Seattle, and in his spare time enjoys hiking and occasionally brewing beer.
